We run a number of different background simulations involving neutrons for our experiment. We are exploring whether we need to use the LEND database, and have uncovered an unexpected conflict. For our physics, we are using the Shielding physics list, which gives us NeutronHP by default.

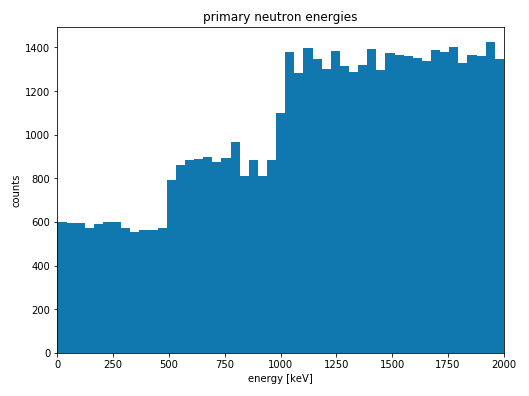

To both quantify backgrounds and model detector calibration with a Cf-252 source, we have simulated a binned spectrum of neutrons incident on a large germanium crystal detector. The low end (0-2 MeV) of the spectrum looks like this (the 0.5 MeV bin width is clearly visible):

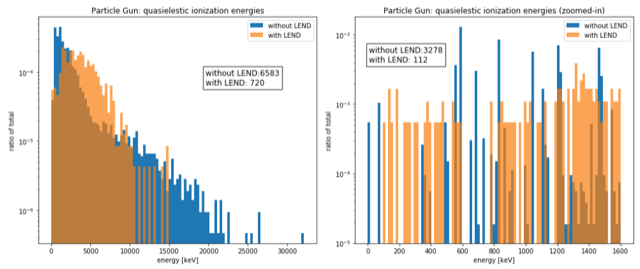

One of the interactions we look at is inelastic/quasielastic scattering: we require a neutron to enter the detector, and look for a recoiling nucleus with an emitted photon, both with the neutron as the parent. In our simulation, this produces distinctive line features when we plot nuclear recoil energy (dE/dx + NIEL) vs. dE/dx. You can see this in the blue histogram on the right side below, where the 13.72 and 68.75 keV lines of germanium are clear, as well as a strong ~600 keV line.

These line features (especially the two low energy lines below 200 keV) are clearly visible in the neutron irradiation data from our real detectors, so we are pleased that the simulation reproduces them well.
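For reference, the parent-based selection described above (a neutron producing both a recoiling nucleus and a photon) can be sketched roughly as follows. This is a simplified, standalone Python illustration, not our actual analysis code: the `Secondary` record, its field names, the `select_inelastic` helper, and the example energies are all hypothetical placeholders, and it assumes the per-event secondaries have already been exported from the simulation with track and parent IDs.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Secondary:
    """Hypothetical per-track record exported from the simulation."""
    track_id: int
    parent_id: int
    particle: str            # e.g. "gamma", "Ge72", "e-"
    is_ion: bool             # True for a recoiling nucleus
    kinetic_energy_kev: float


def select_inelastic(
    neutron_track_id: int, secondaries: List[Secondary]
) -> Optional[Tuple[List[Secondary], List[Secondary]]]:
    """Return (recoils, photons) if the neutron is the direct parent of at
    least one recoiling nucleus and at least one photon; otherwise None."""
    children = [s for s in secondaries if s.parent_id == neutron_track_id]
    recoils = [s for s in children if s.is_ion]
    photons = [s for s in children if s.particle == "gamma"]
    if recoils and photons:
        return recoils, photons
    return None


# Illustrative event with made-up energies: neutron is track 1.
event = [
    Secondary(2, 1, "Ge72", True, 5.0),    # recoiling nucleus from the neutron
    Secondary(3, 1, "gamma", False, 50.0), # photon from the neutron
    Secondary(4, 2, "e-", False, 1.0),     # daughter of the recoil, ignored
]
result = select_inelastic(1, event)
```

Only direct daughters of the neutron are considered here, so photons attached to a different parent track (for example, the recoiling nucleus itself) would not pass this selection.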

We have discovered that if we switch from the regular TENDL database for NeutronHP (the blue histograms) to LEND (orange), the results change dramatically. The lower-energy gamma lines, which come from PhotonEvaporation ($G4LEVELGAMMADATA), disappear completely and are replaced by a continuum de-excitation spectrum, with some sort of “edge” around 1 MeV or so.

Is this a known feature of switching from TENDL to LEND? Is there an additional G4 environment variable we need to set to keep this from happening? We would like to be able to use the LEND database (which improves other simulation results), while keeping PhotonEvaporation active.