Geant4 Version: geant4-11-01-ref-00

Operating System: linux lxplus

Compiler/Version: gcc 12.1

Dear experts/users,

I’m developing simulations to study the deposited energy distributions of gammas produced by neutron captures on natural liquid argon. The simulations run fine and I get most of the gammas that I am supposed to get. However, the energy spectrum shows major discrepancies with respect to the expected intensities.

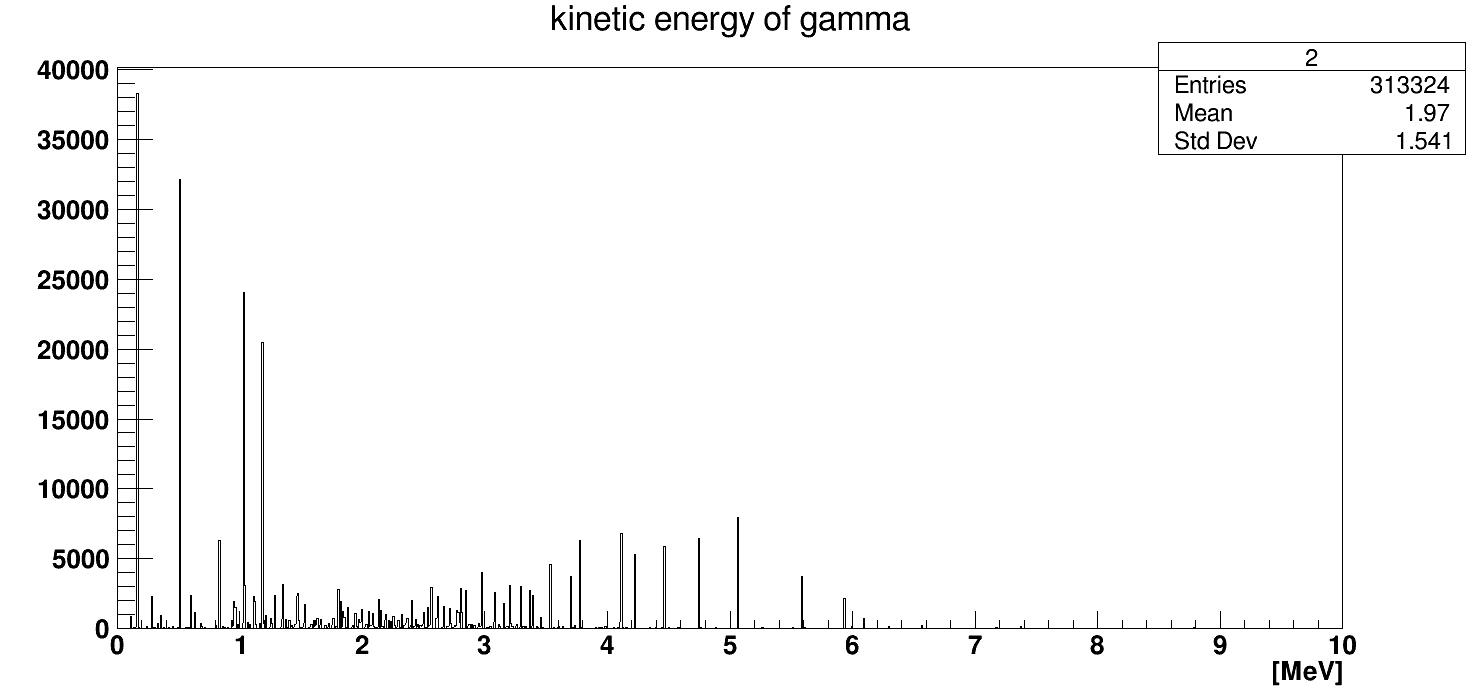

To understand what is going on, I’m using the Hadr03 example with G4_lAr as the material. I obtain this energy spectrum for 100k thermal neutron captures:

The highest intensity corresponds to the 167 keV gamma, but the value is about 38k instead of ~80k (100k thermal neutrons simulated). The other lines with about 50% probability are 4745 keV and 1187 keV, but we have only ~40k for the 1187 keV line and only ~6k for the 4745 keV line. So in some cases the discrepancy is a factor of ~2, and in others it is larger.
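To make the comparison explicit, here is a minimal Python sketch of the observed-to-expected ratio for the three lines. The observed counts are the approximate values read off the histogram, and the expected intensities per capture (~0.8 for 167 keV, ~0.5 for the other two) are the rough numbers quoted above, not exact values from the attached tables. The dictionary layout and the function name are only for illustration.

```python
# Observed vs. expected gamma counts for 100k simulated thermal neutron captures.
# All numbers are the approximate values quoted in this post.
N_CAPTURES = 100_000

lines_kev = {
    167: {"expected_per_capture": 0.80, "observed": 38_000},
    1187: {"expected_per_capture": 0.50, "observed": 40_000},
    4745: {"expected_per_capture": 0.50, "observed": 6_000},
}


def observed_to_expected(entry, n_captures=N_CAPTURES):
    """Ratio of the observed count to the count expected from the intensity."""
    expected = entry["expected_per_capture"] * n_captures
    return entry["observed"] / expected


ratios = {energy: observed_to_expected(entry) for energy, entry in lines_kev.items()}

for energy, ratio in ratios.items():
    print(f"{energy:>5} keV: observed/expected = {ratio:.2f}")
```

A ratio near 1 would mean the simulated line intensity matches the expectation. With these inputs all three ratios come out below 1, and the 4745 keV line is the furthest off.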

I’m using the Hadr03 example with G4HadronPhysicsFTFP_BERT_HP. This is what is printed for the relevant options:

UseOnlyPhotoEvaporation ? 1

SkipMissingIsotopes ? 0

NeglectDoppler ? 0

DoNotAdjustFinalState ? 1

ProduceFissionFragments ? 0

UseWendtFissionModel ? 0

UseNRESP71Model ? 0

Any idea of what could be the problem?

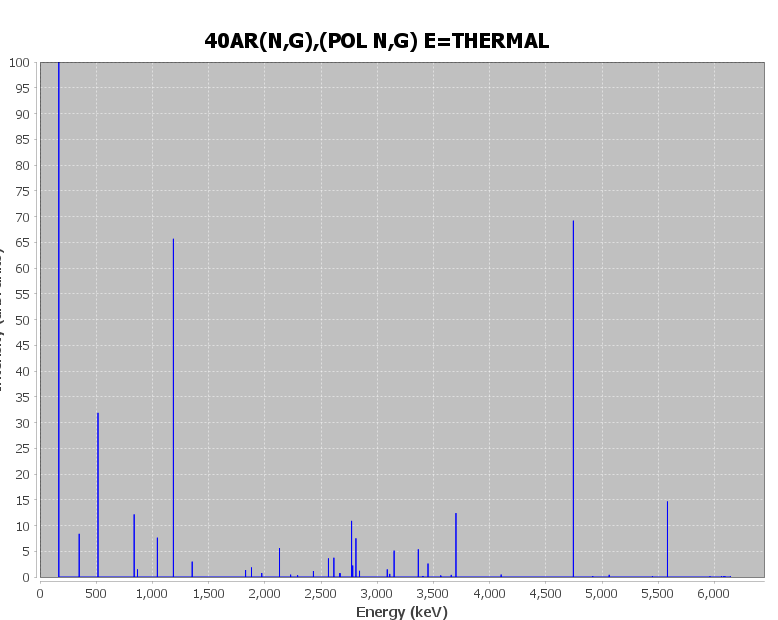

Attached are the expected intensities for Ar40 and Ar36.

ng_E_thermal.pdf (55.1 KB)

ng_pol_n_g_E_thermal.pdf (55.3 KB)

Thanks in advance!

Luis

The test_lAr.mac looks like this:

Macro file for “Hadr03.cc”

/control/verbose 1

/run/verbose 1

/testhadr/det/setMat G4_lAr

/testhadr/det/setSize 1000 m

/process/had/verbose 1

/process/em/verbose 0

/run/initialize

/gun/particle neutron

/gun/energy 25 meV

/process/list

/process/inactivate hadElastic

/analysis/setFileName nCapture

/analysis/h1/set 2 1000 0. 10 MeV #gamma

/analysis/h1/set 8 100 0. 70 keV #nuclei

/analysis/h1/set 11 100 0. 70 MeV #Q

/analysis/h1/set 12 100 0. 15 MeV #Pbalance

/run/printProgress 10000

/run/beamOn 100000
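For reference, the gamma histogram (id 2) in this macro has 1000 bins from 0 to 10 MeV, so each bin is 10 keV wide. A minimal Python sketch of which bin each line of interest falls into, assuming uniform binning and a 0-based bin index (the function name is only illustrative, and if the output file is read with a 1-based bin convention the index needs to be shifted by one):

```python
# Histogram 2 settings taken from the macro: /analysis/h1/set 2 1000 0. 10 MeV
N_BINS = 1000
E_MIN_KEV = 0.0
E_MAX_KEV = 10_000.0

BIN_WIDTH_KEV = (E_MAX_KEV - E_MIN_KEV) / N_BINS


def bin_index(energy_kev):
    """Return the 0-based index of the bin that contains energy_kev."""
    if not (E_MIN_KEV <= energy_kev < E_MAX_KEV):
        raise ValueError("energy outside histogram range")
    return int((energy_kev - E_MIN_KEV) // BIN_WIDTH_KEV)


for line_kev in (167, 1187, 4745):
    print(f"{line_kev} keV -> bin {bin_index(line_kev)} (width {BIN_WIDTH_KEV} keV)")
```

With this binning each of the three lines sits well inside a single 10 keV bin, so the counts quoted above can be compared bin by bin.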