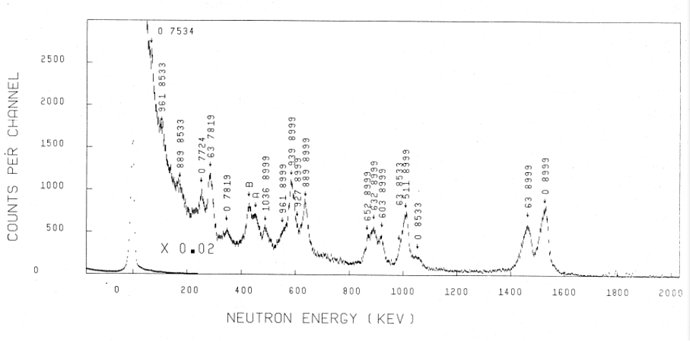

I’m running a Geant4 simulation to detect photoneutrons from targets illuminated by low-energy (7-20 MeV) gamma rays. To validate the results, I have set up a geometry that duplicates one for which experimental data exist. In the experiment, a monoenergetic, collimated, horizontal beam of gamma rays strikes a 2.54 cm cube of material in air, and photoneutrons are detected by a 3He ionization chamber neutron detector with a cylindrical active volume (4.84 cm diameter, 15 cm length). The detector symmetry axis is parallel to the beam direction and offset horizontally by 5.8 cm. An example of an experimental spectrum, taken with a Ni(n,gamma) source (8.999, 8.533, 7.819, 7.724, 7.534 MeV gamma rays) on a 209Bi target, is shown here.

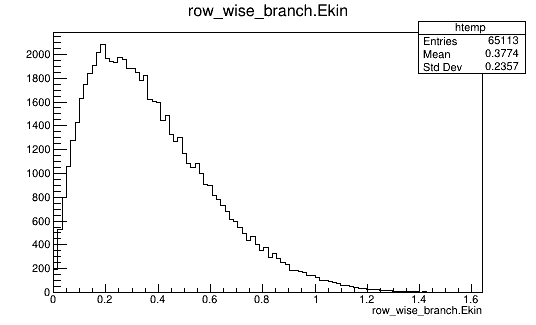

To validate the model, I modified exampleB4a from the Geant4 distribution to reproduce this geometry and used the general particle source to generate the gamma ray beam. Data are recorded whenever a neutron enters the detector volume. I use the g4tools ROOT analysis tools to store various properties of the emitted neutron. I am running Geant4 10.5 patch 01 on an i7 PC with 16 GB RAM and 64-bit Linux (Fedora 30). I have tried four different physics lists: QBBC, QGSP_BIC_HP, Shielding, and ShieldingLEND. An example of the 209Bi(gamma,n) neutron spectrum for one billion 8.999 MeV gamma rays with QBBC is shown in the following figure (QGSP_BIC_HP and Shielding give the same results within statistical uncertainty). The spectrum looks like an evaporation spectrum, with an endpoint roughly equal to the highest neutron energy expected from the Q value (1.532 MeV).

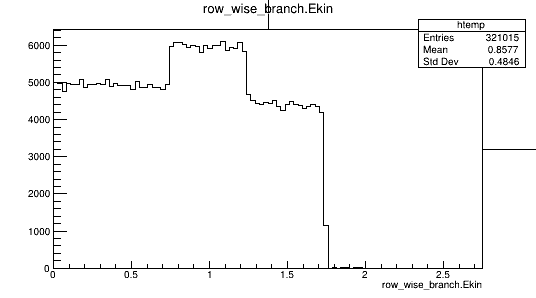

An example of the corresponding spectrum for ShieldingLEND, using 2 billion incident gamma rays, is shown in the following figure. It is dramatically different from the spectra produced by the other three physics lists.

To compare the simulated and experimental spectra, corrections for beam time and detector intrinsic efficiency must be made. Beam time for the experimental spectrum was ~604800 s, whereas the simulation (10^9 gammas) corresponds to ~345 s of beam time. Detector intrinsic efficiency, ~5.7015 x 10^-4 at 1 MeV neutron energy, is not included in the Geant4 simulation. When these are taken into account, we should have Spectrum(simulation) = Spectrum(experiment) * 1.498 if the model and experiment agreed. After correction, the experiment gives Spectrum(experiment) * 1.498 = 100354, while the simulation gives Spectrum(simulation) = 65113 for QBBC and Spectrum(simulation) = 160508 for ShieldingLEND. More importantly, none of the four physics lists gives a spectral shape that even vaguely resembles the experimental data. (Variations of detector resolution - 15 to 35 keV - and efficiency - 0.95 to 0.80 - over the neutron energy range are small and have been neglected.)
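To put the count discrepancy in one place, here is a minimal sketch that forms the simulation-to-experiment ratio for each physics list. It uses only the totals quoted above and takes them at face value: in particular, it does not rescale the ShieldingLEND total for its 2 billion incident gammas, since the post does not say whether that number is already normalized. The variable and function names are illustrative only.

```python
# Totals quoted in the post (corrected experiment vs. simulated spectra).
corrected_experiment = 100354.0  # Spectrum(experiment) * 1.498
simulated = {
    "QBBC": 65113.0,
    "ShieldingLEND": 160508.0,  # taken as quoted, no rescaling applied
}


def ratio_to_experiment(sim_counts, exp_counts):
    """Return simulated counts divided by corrected experimental counts."""
    return sim_counts / exp_counts


ratios = {
    name: ratio_to_experiment(counts, corrected_experiment)
    for name, counts in simulated.items()
}

for name, ratio in ratios.items():
    print(f"{name}: simulation / experiment = {ratio:.2f}")
```

On these numbers QBBC underpredicts by roughly a third and ShieldingLEND overpredicts by roughly 60%, so the two lists bracket the experiment in total counts even though neither reproduces the shape.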

The absolute count rate discrepancy does not bother me as much as the complete inaccuracy of the neutron spectral shape, regardless of physics list. For a monoenergetic gamma ray, I would have expected a set of delta functions (or only one, if excited states of the residual nucleus have been ignored), somewhat broadened by scattering. Similar results are obtained for 9.719 MeV gamma rays. Should this be reported as a bug, or is it already widely known in the Geant4 community?
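As a rough illustration of where those delta functions should sit, the sketch below estimates the ground-state photoneutron energy for each Ni(n,gamma) line. It is a non-relativistic approximation that neglects the photon momentum (and hence any dependence on emission angle): E_n ≈ (E_gamma - S_n - E_x)(A - 1)/A. The neutron separation energy S_n ≈ 7.460 MeV for 209Bi is an assumed value, not taken from the post; it is chosen because it reproduces the 1.532 MeV endpoint quoted above. Excited states of 208Bi would add further lines at lower energy via `e_x`, but no level energies are given here, so only the ground state is shown.

```python
# Assumed inputs (not from the post): approximate neutron separation
# energy of 209Bi and the target mass number.
S_N_BI209_MEV = 7.460
A_TARGET = 209

# Gamma-ray lines of the Ni(n,gamma) source, as listed in the post.
NI_CAPTURE_LINES_MEV = [8.999, 8.533, 7.819, 7.724, 7.534]


def neutron_energy_mev(e_gamma, s_n=S_N_BI209_MEV, a_target=A_TARGET, e_x=0.0):
    """Approximate (gamma,n) neutron energy in MeV.

    Non-relativistic, photon momentum neglected. e_x is the excitation
    energy of the residual nucleus (0 for the ground state).
    Returns None if the gamma ray is below threshold.
    """
    available = e_gamma - s_n - e_x
    if available <= 0.0:
        return None
    # The residual nucleus (A - 1) carries away a fraction 1/A of the energy.
    return available * (a_target - 1) / a_target


expected_lines = {e: neutron_energy_mev(e) for e in NI_CAPTURE_LINES_MEV}

for e_gamma, e_n in expected_lines.items():
    print(f"E_gamma = {e_gamma:.3f} MeV -> E_n ~ {e_n:.3f} MeV")
```

Under these assumptions every source line is above threshold, so the full Ni(n,gamma) source would give five ground-state lines, while a single monoenergetic beam, as in the simulation, would give one.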